What is a Social Robot? Understanding the 'Cocktail Party' Problem

Humans are social creatures. More than any other animal, we devote a lot of precious time and energy to understanding and regulating our interactions with the people around us. This means we constantly read the social and emotional signals of others, and consciously or unconsciously regulate the signals we send in return. Our social brain has allowed us to achieve what individuals never could: build effective teams and large companies, live in towns and cities, and create nations with social security and collective defence.

Not all robots will have to interact with humans as part of their standard operations; it depends on what they were designed to do. But a large subset of robots do, either as their core mission (e.g. hospitality robots, health care robots) or as a result of where they work (e.g. robots that do chores in private homes). Just as humans must, those robots have to fit into this social fabric if they are to be adopted at scale and deliver the value they are technically capable of.

But first… what is a robot?

Before defining what a social robot is, let’s define what a robot is. I personally like Kaplan’s definition, which is what we’ll use here:

“A robot is an object that possesses three properties: (1) It is a physical object, (2) it is functioning in an autonomous and (3) in a situated manner.”

This means a robot is a programmed physical entity that perceives and acts autonomously within a physical environment, and that environment influences its actions. In addition, the robot has a physical presence through which it interacts with its surroundings; that is to say, it manipulates not only information but also physical objects.

Using this definition rules out purely digital systems such as chatbots or simple digital automation tools that only process information - they are not robots.

And now… what is a social robot?

At BLUESKEYE AI we define a social robot as one that is able to take into account the reality of its social surroundings as it makes decisions on how to act, and that would not achieve its full potential without doing so. It is a robot that interacts with humans by following the behavioural norms expected by its users, using a social interface that sits between the user and the robot’s beliefs, desires, and intentions action planning system.

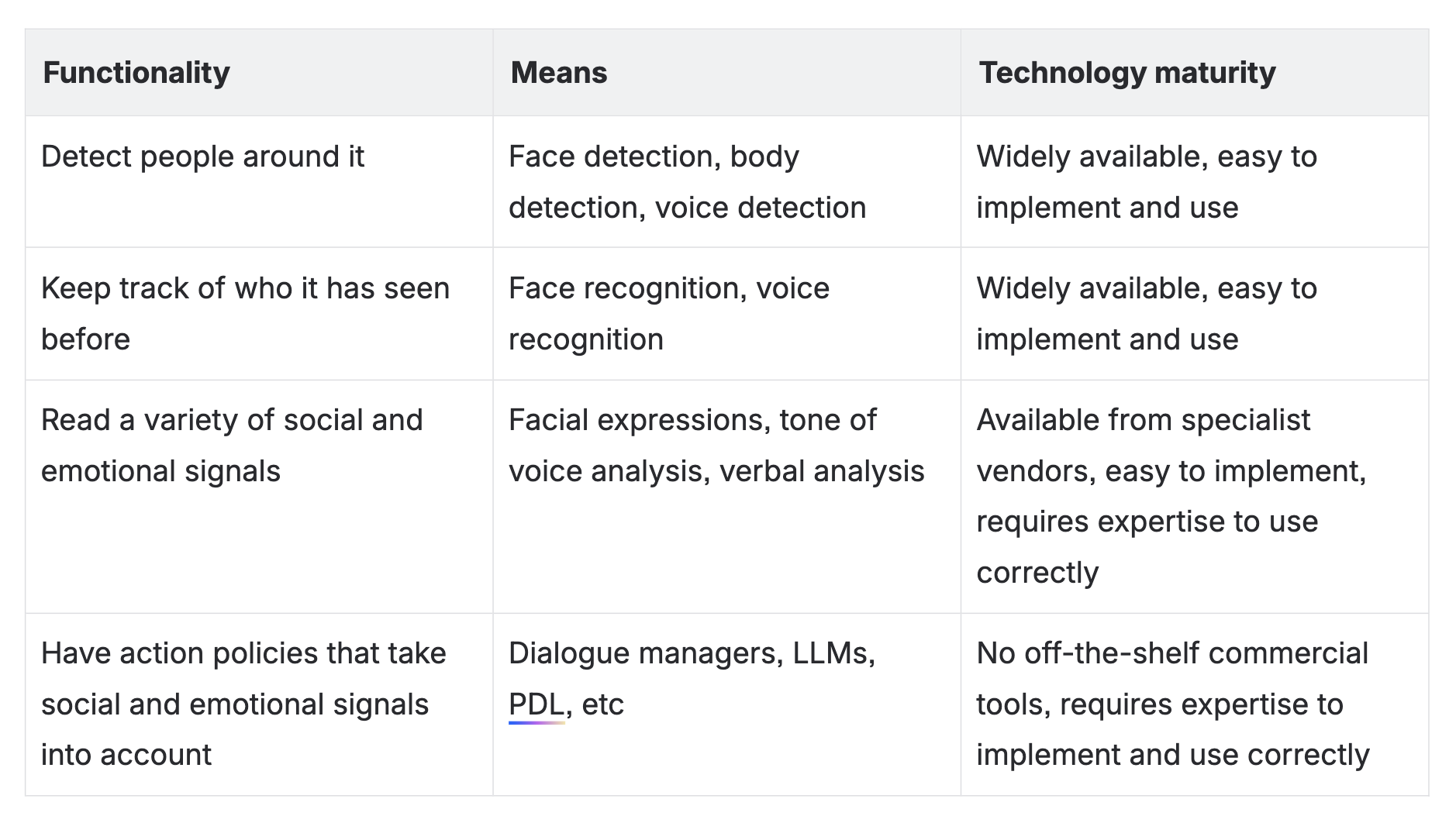

It is BLUESKEYE’s mission to fully understand the expressive behaviour of humans, and to transfer that understanding and measurement capability to the developers of social robots. A full understanding of human behaviour and all its complexities is of course a very long-term mission. Today, to be taken seriously as a social robot, a robot should at a minimum:

These are all capabilities that are now readily available; for example, they’re all included in BLUESKEYE AI’s B-Social product. Reading ‘a variety of social and emotional signals’ is of course a rather broad category. I would expect it to include, at the least, the detection of: apparent emotion, subject of attention, level of engagement, speech activity, and non-verbal indicators of agreement or disagreement (e.g. head nodding/shaking). See BLUESKEYE AI’s article ‘What Is Expressive Behaviour Analysis?’ for more information on what expressive behaviour analysis is.

Note that by this definition, a standard LLM like Gemini or Copilot is not a social (virtual) robot, firstly because it doesn’t detect people around it, and secondly because it doesn’t explicitly read and use social and emotional signals.

Of course, deploying an LLM on a robot and feeding it social and emotional signals as part of its input ('prompt'), so that it generates more empathetic or socially aware responses, is one clear way of obtaining an action policy that takes social and emotional signals into account. BLUESKEYE AI has proprietary technology for using LLMs in this way (see our ‘Mood- and mental state-aware interaction with multimodal large language models’ patent).
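To make the idea of signals-as-prompt concrete, here is a minimal sketch in Python. It is purely illustrative and is not BLUESKEYE AI's proprietary method or the B-Social API: the `SocialSignals` class, its field names, and the `build_prompt` function are all hypothetical, chosen to mirror the signal types listed earlier. It only assembles the prompt text; sending it to an LLM is left out.

```python
from dataclasses import dataclass


@dataclass
class SocialSignals:
    """Hypothetical container for signals a perception system might report."""
    apparent_emotion: str   # e.g. "frustrated", "happy", "neutral"
    attention_target: str   # what the person is looking at, e.g. "robot"
    engagement: float       # assumed scale: 0.0 (disengaged) to 1.0 (fully engaged)
    is_speaking: bool       # speech activity
    head_gesture: str       # "nod", "shake", or "none"


def build_prompt(utterance: str, signals: SocialSignals) -> str:
    """Fold the observed signals into the text prompt given to an LLM."""
    if not 0.0 <= signals.engagement <= 1.0:
        raise ValueError("engagement must be between 0.0 and 1.0")
    context = [
        f"Apparent emotion: {signals.apparent_emotion}",
        f"Attention directed at: {signals.attention_target}",
        f"Engagement level: {signals.engagement:.0%}",
        f"Currently speaking: {'yes' if signals.is_speaking else 'no'}",
        f"Head gesture: {signals.head_gesture}",
    ]
    return (
        "You are a robot talking to a person. "
        "Adapt your tone to the observed social signals.\n"
        "Observed signals:\n- " + "\n- ".join(context) + "\n"
        f'The person said: "{utterance}"'
    )


if __name__ == "__main__":
    signals = SocialSignals("frustrated", "robot", 0.25, False, "shake")
    print(build_prompt("Where is my order?", signals))
```

Keeping the signals in a small typed structure, rather than formatting them ad hoc, makes it easy to swap in whatever perception system actually produces them.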

How far does the rabbit-hole go?

For many robots, such as delivery robots that operate inside crowded buildings, the need to be social is secondary to their primary function. For those, a limited set of social and emotional skills may be sufficient. However, robots whose primary function is entirely about human interaction require a deeper understanding of human behaviour in order to achieve their full potential.

These are capabilities that are still very much topics of active research and development, both at BLUESKEYE AI and in other labs. Good examples are:

Self-awareness of the robot in terms of the social context

Full implementation of theory of mind

Understanding group dynamics including specific roles

Detecting and using aspects of social context such as it being a work or home environment

Understanding the level of familiarity between individuals

Taking into account personality traits of individuals

Understanding social hierarchies and power dynamics in small group interactions

There are probably more, and the topics will become more clearly defined as they are researched and operationalised for social robot deployment. Some aspects are partially addressed with existing technology. For example, some of the basic elements of theory of mind are covered by BLUESKEYE AI’s solution to the cocktail party problem (see below).

For more information about Social Robots, see e.g. Hegel et al.'s ‘Understanding Social Robots’ or Cynthia Breazeal’s ‘Designing Sociable Robots’.

What is the cocktail party problem?

You may think that what a social robot needs first is to understand a person’s emotional and mental state so that it can act empathetically, especially if it relies heavily on dialogue to achieve its goals. However, from talking to most of the social robotics companies that are active today, BLUESKEYE AI concluded that there is a bigger, more pressing problem that needs to be solved first.

It’s called the Cocktail Party Problem and if you don’t solve it, your social robot is a non-starter. Literally.

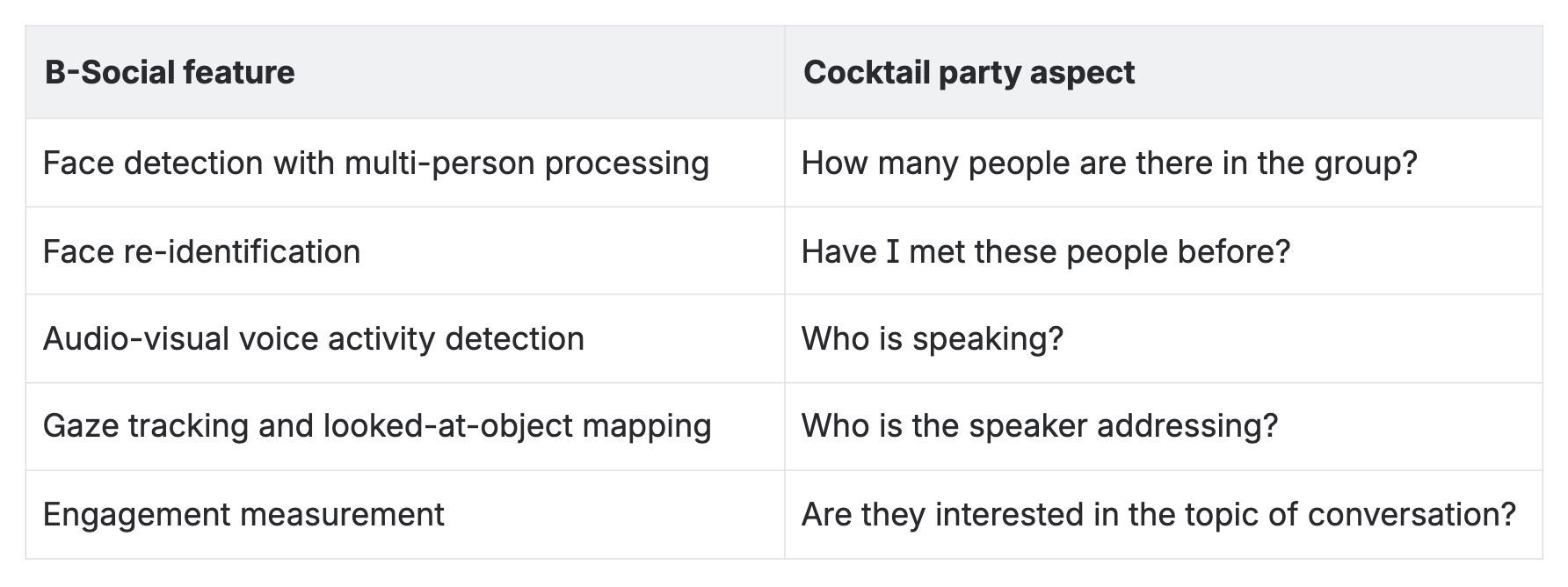

The cocktail party problem is named after the classic scenario of a party where small groups of people, typically two to five at a time, gather and have a brief conversation on a topic of common interest. Your goal is to mingle, get to know people, make some small talk, and leave a good impression. To truly engage, a social robot needs to know:

How many people, if any, are in front of me?

Who are they? Do I know them?

Who is speaking?

Who are they addressing?

What is the topic of conversation?

Are they interested in the current topic of conversation?

Imagine a robot that lacks the capacity to solve the cocktail party problem attempting this task. Assuming it could still navigate to where a group of people are talking, it would approach and just start talking, probably over someone else. It might introduce itself to someone it has already met. Without knowing who a speaker is addressing, it might answer a question before the intended recipient could. All in all, a robot without the social skills to navigate the cocktail party problem will cause annoyance and frustration. Your robot will not leave a good first impression. And that’s before it has even started on whatever its main goal was.
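The failure modes above suggest how the answers to the six questions could gate a robot's behaviour. The following Python sketch shows one possible turn-taking rule set under simplifying assumptions. Every name in it (`Person`, `Scene`, `next_action`, `ROBOT_ID`, and the action labels) is hypothetical and invented for illustration; it is not part of the B-Social SDK, and a real system would work with uncertain, continuously updated estimates rather than clean booleans.

```python
from dataclasses import dataclass, field
from typing import List, Optional

ROBOT_ID = "robot"  # hypothetical identifier for the robot itself


@dataclass
class Person:
    person_id: str
    known: bool               # "Who are they? Do I know them?"
    interested: bool = True   # "Are they interested in the current topic?"


@dataclass
class Scene:
    people: List[Person] = field(default_factory=list)  # "How many people are in front of me?"
    active_speaker_id: Optional[str] = None   # "Who is speaking?" (None means silence)
    last_addressee_id: Optional[str] = None   # "Who are they addressing?"
    topic: Optional[str] = None               # "What is the topic of conversation?"


def next_action(scene: Scene) -> str:
    """Pick a coarse action label from the current estimate of the scene."""
    if not scene.people:
        return "idle"                # nobody there, nothing to do
    if scene.active_speaker_id is not None:
        return "listen"              # never talk over someone else
    if scene.last_addressee_id == ROBOT_ID:
        return "respond"             # the last utterance was meant for the robot
    if scene.last_addressee_id is not None:
        return "wait"                # let the intended recipient answer first
    if any(not p.known for p in scene.people):
        return "introduce_self"      # only introduce itself to people it has not met
    if not any(p.interested for p in scene.people):
        return "change_topic"        # nobody cares about the current topic
    return "contribute"


if __name__ == "__main__":
    scene = Scene(
        people=[Person("alice", known=True), Person("bob", known=False)],
        active_speaker_id=None,
        last_addressee_id="bob",
        topic="weather",
    )
    print(next_action(scene))
```

The order of the checks encodes the priorities described above: stay silent while someone speaks, answer only when addressed, and introduce yourself only to people you have not met.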

The good news is that using BLUESKEYE AI’s B-Social SDK you can solve the cocktail party problem. Here’s how the B-Social features map to the different aspects of this problem:

Using these features, combined with your favourite LLM, your social robot can complete any small-talk conversation. From there, it is an easy step to weave in other behaviour understanding capabilities, such as apparent emotion recognition or attitude and intention estimation, to achieve a truly empathetic experience for your customers that leaves a long-lasting, positive memory.